The GPT-Vision model has caught everyone’s attention. People are excited about its ability to understand and generate content related to text and images. However, there’s a challenge – we don’t know precisely what GPT-Vision is good at and where it falls short. This lack of understanding can be risky, primarily if the model is used in critical areas where mistakes could have serious consequences.

Traditionally, researchers evaluate AI models like GPT-Vision by collecting extensive data and using automatic metrics for measurement. However, an alternative approach- an example-driven analysis- is introduced by researchers. Instead of analyzing vast amounts of data, the focus shifts to a small number of specific examples. This approach is considered scientifically rigorous and has proven effective in other fields.

To address the challenge of comprehending GPT-Vision’s capabilities, a team of researchers from the University of Pennsylvania has proposed a formalized AI method inspired by social science and human-computer interaction. This machine learning-based method provides a structured framework for evaluating the model’s performance, emphasizing a deep understanding of its real-world functionality.

The suggested evaluation method involves five stages: data collection, data review, theme exploration, theme development, and theme application. Drawing from grounded theory and thematic analysis, established techniques in social science, this method is designed to offer profound insights even with a relatively small sample size.

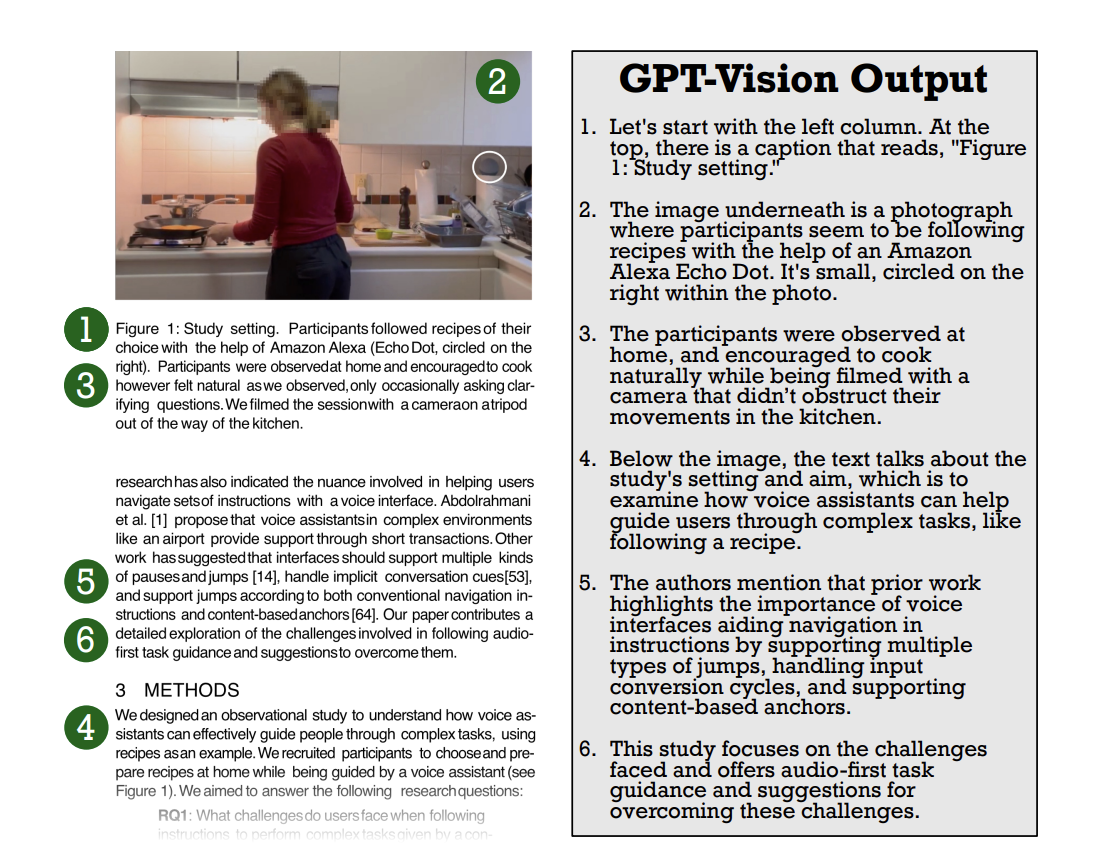

To illustrate the effectiveness of this evaluation process, the researchers applied it to a specific task – generating alt text for scientific figures. Alt text is crucial for conveying image content to individuals with visual impairments. The analysis reveals that while GPT-Vision displays impressive capabilities, it tends to depend on textual information overly, is sensitive to prompt wording, and struggles with understanding spatial relationships.

In conclusion, the researchers emphasize that this example-driven qualitative analysis not only identifies limitations in GPT-Vision but also showcases a thoughtful approach to understanding and evaluating new AI models. The goal is to prevent potential misuse of these models, particularly in situations where errors could have severe consequences.

Niharika is a Technical consulting intern at Marktechpost. She is a third year undergraduate, currently pursuing her B.Tech from Indian Institute of Technology(IIT), Kharagpur. She is a highly enthusiastic individual with a keen interest in Machine learning, Data science and AI and an avid reader of the latest developments in these fields.